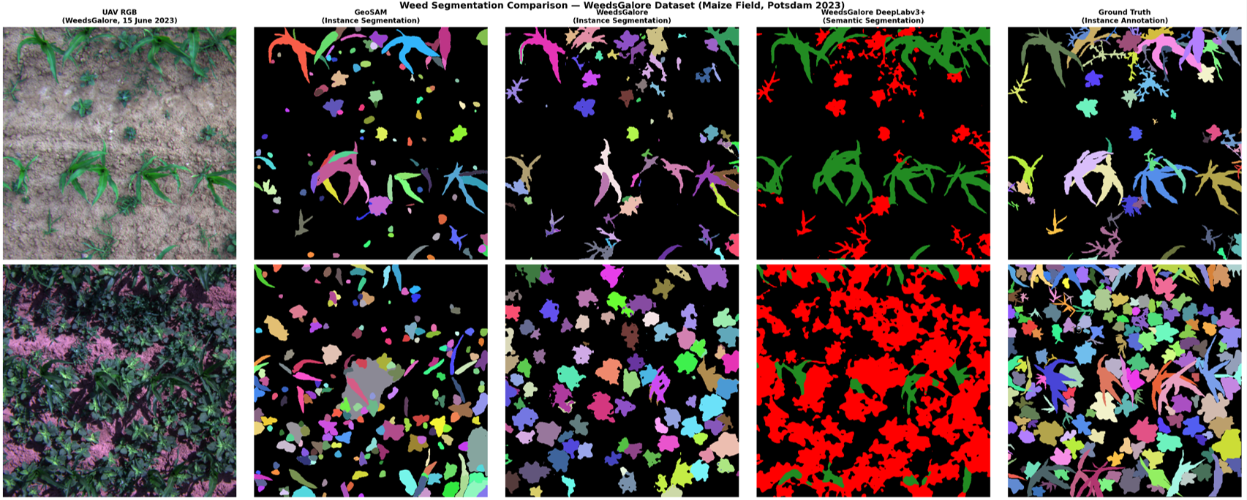

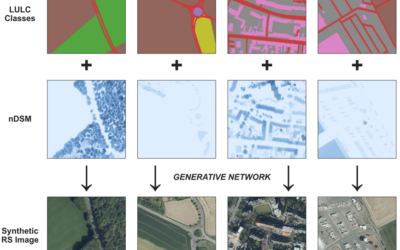

From the abstract: Effective weed management remains a key challenge in modern agriculture, where increasing food demand must be met without expanding agricultural land. This Innolab, conducted at the GFZ Helmholtz Centre Potsdam (Section 1.4 – Global Land Monitoring), explores automated weed segmentation using very high-resolution multispectral UAV imagery from the WeedsGalore dataset, collected in maize fields near Potsdam between 2023 and 2024. The project focused on three interconnected components. First, UAV data processing in Agisoft Metashape revealed that orthomosaic quality is highly sensitive to flight conditions and radiometric calibration, with visible artefacts and preprocessing trade-offs affecting data consistency. Second, a comparative evaluation of three deep learning segmentation models (GeoSAM, Mask2Former, and DeepLabV3+) highlighted differences between instance and semantic segmentation approaches. On the same dataset, supervised models outperformed zero-shot methods for weed segmentation. While zero-shot results captured object boundaries effectively, they lacked class discrimination, and their performance degraded in scenes with high vegetation density. Lastly, a literature review on agricultural Vision-Language Models analysed trends in datasets, architectures, and key limitations, and identified a gap between remote sensing and multimodal agricultural machine learning research: no existing model currently combines language grounding with multispectral UAV-based weed segmentation. These findings point toward future research on multimodal approaches that integrate language understanding with high-resolution geospatial analysis for precision agriculture.

1st supervisor: Dr. Maninder Singh Dhillon

Host: GFZ Helmholtz Centre Potsdam (Section 1.4 – Global Land Monitoring)